In one of my current projects I had to configure a TFS deployment for the customizations I wrote for AD FS and FIM. Setting up a deployment (build) in TFS seems pretty straightforward. The most complex thing I found was the XAML file, which contains the logic to actually build and deploy the solutions. I picked a fairly default template and cloned it so I could change it to my needs. You can edit such a file with Visual Studio, which gives you a visual representation of the various flows, checks and decisions. Even after a colleague of mine introduced me to the content of such a file, I was still overwhelmed. The amount of complexity in there seems to shout: change me as little as you can.

As I have some deployments which require .NET DLLs to be deployed on multiple servers, I had two options: modify the XAML so it’s capable of taking an array as input and executing the script multiple times, or modify the parameters so I could pass an array to the script. I opted for the second option.

My first attempt consisted of adding an attribute of the type String[] to the XAML for that deployment step. In my build definition this gave me a multi-valued parameter where I could enter multiple servers. However, in my script I kept getting the value “System.String[]” where I’d expect something along the lines of Server01,Server02. This actually made sense: TFS probably has no idea it needs to convert the input to a PowerShell array.

So I wondered whether it would work if I used a plain String parameter in the build and fed it something like @("Server01","Server02"), which is the PowerShell way of defining an array.

Well it did, but not exactly the way we want. The quotes got mangled along the way, so the value only arrived partially in the script. So we had to do some magic. Passing the parameters to the script means you pass through some VB.NET code. This is simple text-handling code, and all we need to do for this to work is add some quote-handling magic. Here’s my test console application, which takes an array as input and makes sure I get the required number of quotes in the output.

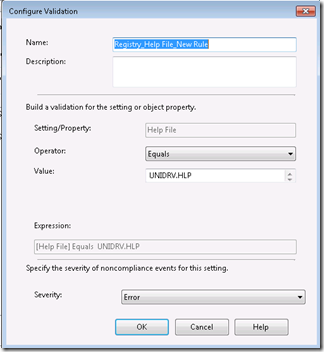

Here’s the TFS parameter section where we specify the arguments for the scripts. The magic happens in the “Servers.Replace” part, which ensures that the quotes “survive” being passed along to the PowerShell script.

String.Format(" ""& '{0}\{1}' '{2}' {3} "" ", ScriptsFolderMapping, BuildScriptName, BinariesDirectory, Servers.Replace("""", """"""""))

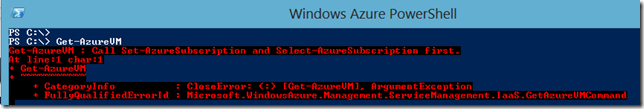
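To make the quote handling easier to follow without a TFS build at hand, here is a minimal Python sketch that mirrors the VB.NET expression above. It is only an illustration of the string handling, not the code that runs in TFS. The function names and the sample folder and script names are hypothetical, chosen just for this example.

```python
def escape_quotes(servers: str) -> str:
    # Mirrors Servers.Replace("""", """""""") from the VB.NET expression:
    # every double quote in the input becomes three double quotes.
    return servers.replace('"', '"""')


def build_arguments(scripts_folder: str, script_name: str,
                    binaries_dir: str, servers: str) -> str:
    # Same layout as the String.Format template in the post:
    #   " ""& '{0}\{1}' '{2}' {3} "" "
    # The sample values passed in below are made up for illustration.
    template = ' "& \'{0}\\{1}\' \'{2}\' {3} " '
    return template.format(scripts_folder, script_name,
                           binaries_dir, escape_quotes(servers))


if __name__ == "__main__":
    # The value as you would type it in the build definition.
    servers = '@("Server01","Server02")'
    print(escape_quotes(servers))
    print(build_arguments(r"C:\Scripts", "Deploy.ps1", r"C:\Binaries", servers))
```

With the input @("Server01","Server02"), the first line printed is @("""Server01""","""Server02"""), so each of the four original quotes has been tripled before the string is handed on towards PowerShell.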

In the GUI the expression goes into the “Arguments” field:

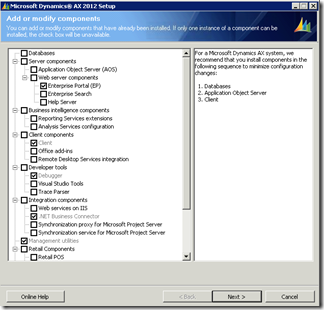

This allows us to configure the build definition like this, which is actually pretty simple: just enter the array as you’d write it in PowerShell.

P.S. Make sure to either copy paste or count those quotes twice ; )

![clip_image002[4] clip_image002[4]](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEj7ojS863E48jYdozF5ldHDxPfTCDxRNnjZ1UDya9quog6uQAhuSn0SSDJIgygNkGSFTpCPai8JWDSTbvv4YSCEduDbS7pKnAlIVYPn8F3IzXv20VnXHRQGZXHcCQxa-Rn1Cu9R-1EiPg/?imgmax=800)

![clip_image002[6] clip_image002[6]](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEjS9JIkPnjqhKgY-43zFvt0Sktgn-6m7AcqzQgFXbiq1GoOVXKy396eG3oN6_jRAnHcGITY1_gpxqK4ruorOOlcG9fUwNyb_tMAhcJlG8QeXDiR5lF87u8pCcE0S3s8wm8YVMdh-aTZ6A/?imgmax=800)

![clip_image002[8] clip_image002[8]](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEhOsvM4v-8gWqdaNoEFlEttsU1TwrKWetCD78DLZktWuNJICehBuQQaPzCnriaOGSQGrK0aH6QV6QAPzJe1F8JN8nqI8Ol3JKeiHN65Yb6R1iCsF8HVY8hSYi59xc7cM9hXCSABil7_dg/?imgmax=800)

![clip_image002[10] clip_image002[10]](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEjJkyHxUG4tfEtiJpgHoT0zcj_NO__nuFXmtU6Yezqy92i9ezWkgaVKePgqiNHVko06KWnpa466k0piWsYtpN_lZAA2VllcOOhXl0b7f9EAMq5qTKYo1Lrl84YqihS0eg-GOiA4TJD-bw/?imgmax=800)
